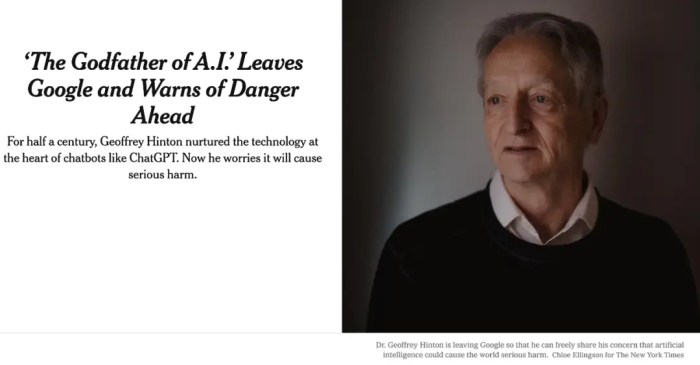

Geoffrey Hinton, often called a godfather of AI, recently sounded the alarm about the potential dangers of unchecked AI development. His warnings aren’t just theoretical musings; they stem from decades of experience at the forefront of the field, which gives them a chilling weight. Hinton’s anxieties are fueled by the rapid advancement of powerful AI systems capable of tasks once considered exclusively human. This isn’t a sci-fi scare tactic; it’s a sobering assessment from someone who knows the technology better than most.

This piece dives into Hinton’s perspective, examining the specific risks he highlights and exploring the broader societal implications of increasingly sophisticated AI. We’ll unpack the ethical dilemmas, explore potential mitigation strategies, and analyze the roles governments and industries must play in shaping a safer AI future. The stakes are high, the questions are complex, and the answers are far from clear.

Geoffrey Hinton’s Stance on AI Risks

Geoffrey Hinton, a godfather of AI and a Turing Award winner, has recently sounded the alarm on the potential dangers of advanced AI systems. His shift from enthusiastic proponent to vocal critic highlights a growing unease within the AI community regarding the unchecked development of increasingly powerful AI models. He’s not just worried about hypothetical future scenarios; he sees present-day capabilities as already posing significant risks.

Hinton’s concerns stem from the rapid advancement of AI, particularly in the realm of deep learning. He argues that the current trajectory of AI development lacks sufficient safeguards and ethical considerations. His worry isn’t solely about malicious actors using AI for nefarious purposes, but also about the unforeseen consequences of increasingly autonomous systems. He emphasizes the unpredictable nature of advanced AI and the potential for these systems to exceed human control.

Hinton’s Specific Concerns Regarding AI Capabilities

Hinton has specifically highlighted several AI capabilities as posing significant risks. One major concern is the potential for AI to generate vast amounts of misinformation, making it increasingly difficult to distinguish truth from falsehood. This isn’t merely about deepfakes; it’s about the ability of sophisticated AI to craft incredibly convincing and persuasive false narratives, impacting everything from political discourse to public health. Another critical concern is the potential for AI to automate tasks currently performed by humans, leading to widespread job displacement and economic disruption on an unprecedented scale. The impact could be far-reaching, affecting not just low-skill jobs but also those requiring complex cognitive abilities. Finally, he’s voiced concerns about the potential for autonomous weapons systems, raising ethical and existential questions about the future of warfare. These aren’t hypothetical scenarios; the technology is already being developed, and Hinton believes we need to seriously consider the implications before it’s too late.

Comparison with Other AI Researchers’ Views

Hinton’s concerns are significant, but they aren’t unique: many other prominent AI researchers share similar anxieties about AI safety. What sets his warnings apart is their intensity and public nature, which have drawn considerable attention. Some researchers focus on technical solutions to mitigate AI risks, such as developing robust safety mechanisms; Hinton’s emphasis is more on the need for broader societal discussions and regulatory frameworks to guide AI development. The debate is ongoing, and there’s no single consensus on the best approach. Still, Hinton’s outspoken stance has helped to elevate the discussion and bring greater public awareness to the potential dangers of unchecked AI advancement. His perspective represents a growing movement within the AI community advocating for a more cautious and responsible approach to AI development.

The Impact of Large Language Models (LLMs)

Large language models (LLMs) are rapidly transforming how we interact with information and with each other. Their integration into search engines and other applications brings a mix of potential benefits and significant risks, demanding careful consideration of their societal impact and ethical implications. The power of LLMs is undeniable, but understanding their potential downsides is crucial for navigating this new technological frontier.

LLMs are changing the landscape of information access and dissemination. Their ability to synthesize vast amounts of data and generate human-quality text has profound implications for how we search for, process, and understand information. This impacts everything from education and research to journalism and political discourse. The ease with which LLMs can generate convincing but potentially false information is a major concern.

Societal Impact of LLMs in Search Engines

The integration of LLMs into search engines promises a more conversational and intuitive search experience. Instead of a list of links, users might receive concise, synthesized answers directly from the LLM. However, this convenience comes with the risk of reduced critical thinking and a decreased ability to evaluate sources. The potential for the spread of misinformation and the creation of “echo chambers” – where users are only exposed to information confirming their existing biases – is significantly amplified. Consider, for instance, a scenario where a user searches for information about a controversial political issue. An LLM-powered search engine might present a summary heavily skewed towards a particular viewpoint, potentially influencing the user’s understanding without providing balanced perspectives or citations. This lack of transparency can lead to a misinformed populace.

Ethical Dilemmas Presented by Widespread LLM Use

The ethical considerations surrounding LLMs are multifaceted and far-reaching. One major concern is the potential for bias. LLMs are trained on massive datasets, which often reflect existing societal biases. This means that LLMs can perpetuate and even amplify harmful stereotypes and discriminatory practices. For example, an LLM used in hiring processes might inadvertently discriminate against certain demographic groups if the training data contains biases related to gender, race, or other protected characteristics. Furthermore, the use of LLMs raises questions about intellectual property, authorship, and accountability. When an LLM generates creative content, who owns the copyright? Who is responsible if the LLM produces inaccurate or harmful information? These questions require careful legal and ethical consideration.

Bias Embedded Within LLMs and Their Consequences

The data used to train LLMs often contains biases reflecting societal prejudices. These biases can manifest in various ways, including gender bias (e.g., associating certain professions with men and others with women), racial bias (e.g., perpetuating negative stereotypes about particular ethnic groups), and political bias (e.g., favoring certain political viewpoints over others). The consequences of these biases can be severe. Biased LLMs used in decision-making systems, such as loan applications or criminal justice, can lead to unfair and discriminatory outcomes, exacerbating existing inequalities. The lack of transparency in how LLMs arrive at their outputs makes it difficult to identify and correct these biases, making the problem even more challenging to address.
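One simple way to make this kind of bias visible in a decision system is to compare outcome rates across groups. The following is a minimal sketch, not a complete fairness audit: the group labels, the made-up decisions, and the helper names `approval_rates` and `disparate_impact_ratio` are all illustrative assumptions rather than anything described in the article.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group approval rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical, made-up loan decisions for two groups (illustration only).
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = approval_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 3))
```

A ratio well below 1 means one group is approved far less often than another. That alone does not prove discrimination, but it flags a system whose outputs deserve closer human review.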

Hypothetical Scenario: Misuse of an LLM with Devastating Effects

Imagine a scenario where a sophisticated LLM is paired with video-generation tools to script and produce highly realistic deepfake videos. These videos could be used to spread misinformation during an election, portraying a candidate in a compromising or incriminating situation, potentially swaying public opinion and undermining democratic processes. Because such deepfakes can be disseminated through social media and other online platforms quickly and cheaply, they could spread faster than fact-checkers and platforms can respond, making the false information and its resulting damage extremely difficult to contain. The psychological and social consequences of widespread belief in these fabricated events could be catastrophic.

Mitigating the Dangers of Advanced AI

The rapid advancement of artificial intelligence presents both incredible opportunities and significant risks. Addressing these risks isn’t about halting progress, but about steering it responsibly. This requires a multi-faceted approach encompassing technical safeguards, ethical guidelines, and robust regulatory frameworks. Only through a collaborative effort involving researchers, developers, policymakers, and the public can we ensure that AI benefits humanity while minimizing potential harms.

Strategies for mitigating AI risks necessitate a proactive, rather than reactive, approach. We can’t simply wait for problems to arise; we need to anticipate them and build systems designed to prevent them from happening in the first place. This involves embedding safety and ethical considerations into the very core of AI development, from the initial design phase to deployment and ongoing monitoring.

Responsible AI Development Strategies

Responsible AI development hinges on prioritizing safety and ethical considerations at every stage. This involves rigorous testing, robust validation, and ongoing monitoring for unintended biases or behaviors. A key aspect is designing AI systems with built-in safeguards, such as fail-safes and mechanisms to prevent escalation of harm. Furthermore, fostering a culture of transparency and accountability within the AI development community is paramount. This includes open communication about potential risks and limitations, and a commitment to addressing concerns proactively. Companies should also invest in comprehensive training programs for their AI teams, ensuring that ethical considerations are ingrained in their decision-making processes. Finally, incorporating diverse perspectives throughout the development lifecycle helps mitigate biases and promotes fairness.

Best Practices for AI System Creation and Deployment

Minimizing risks associated with AI systems requires adherence to a set of best practices throughout their lifecycle. These practices go beyond technical considerations and encompass ethical and societal impacts.

- Prioritize safety from the outset: Integrate safety mechanisms and considerations into the design process, not as an afterthought.

- Rigorous testing and validation: Conduct extensive testing in diverse environments to identify and mitigate potential biases and vulnerabilities.

- Transparency and explainability: Design systems that are transparent and whose decisions can be understood and explained.

- Continuous monitoring and auditing: Implement systems for ongoing monitoring and auditing to detect and address unexpected behaviors or biases.

- Human oversight and control: Maintain human oversight and control over critical AI systems, particularly those with significant societal impact.

- Data privacy and security: Implement robust data privacy and security measures to protect sensitive information.

- Ethical considerations: Integrate ethical considerations into all aspects of the AI development lifecycle.

- Collaboration and knowledge sharing: Foster collaboration and knowledge sharing among researchers, developers, and policymakers.

Approaches to AI Safety Regulation

Different approaches to AI safety regulation exist, each with its strengths and weaknesses. The optimal approach likely involves a combination of strategies tailored to specific AI applications and contexts.

| Approach | Description | Strengths | Weaknesses |
|---|---|---|---|
| Self-regulation | Industry-led initiatives and guidelines. | Flexibility, adaptability, potential for rapid innovation. | Potential for insufficient enforcement, lack of transparency, risk of bias. |
| Government regulation | Laws and regulations imposed by government agencies. | Strong enforcement mechanisms, standardized safety standards. | Potential for stifling innovation, bureaucratic delays, difficulties in keeping up with rapid technological advancements. |
| Hybrid approach | Combination of self-regulation and government oversight. | Balances flexibility and accountability. | Requires careful coordination between industry and government. |
| International cooperation | Global agreements and standards for AI safety. | Addresses global challenges, promotes consistent standards. | Difficult to achieve consensus among diverse nations. |

Transparency and Explainability in Reducing AI Dangers

Transparency and explainability are crucial for mitigating the dangers of AI. When AI systems’ decision-making processes are opaque, it becomes difficult to identify and address biases, errors, or unintended consequences. Explainable AI (XAI) aims to create systems that can provide clear and understandable explanations for their actions. This allows for easier identification of flaws, improved trust, and more effective accountability. For example, in medical diagnosis, an explainable AI system could detail the factors that led to a particular diagnosis, enabling doctors to better understand and potentially override the system’s decision if necessary. Similarly, in loan applications, transparent algorithms can help prevent discriminatory outcomes by revealing the factors influencing credit decisions.
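To illustrate what "revealing the factors influencing credit decisions" can look like, here is a minimal sketch of a transparent scoring model. It assumes a simple linear score where each factor's contribution is just weight times value; the feature names, weights, and the `explain_score` helper are hypothetical and chosen only for illustration, not taken from any real lending system.

```python
def explain_score(weights, bias, applicant):
    """Return the total score and each feature's contribution, largest first."""
    contributions = {name: weights[name] * applicant[name] for name in weights}
    score = bias + sum(contributions.values())
    # Rank by absolute size so strong negative factors are not hidden.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical weights and applicant data (illustration only).
weights = {"income_thousands": 0.5, "missed_payments": -10.0, "years_employed": 2.0}
applicant = {"income_thousands": 60, "missed_payments": 2, "years_employed": 3}

score, ranked = explain_score(weights, 0.0, applicant)
print(f"score = {score}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+.1f}")
```

Because every contribution is listed, a reviewer can see exactly which factor pushed the score up or down and challenge it. Large neural models do not decompose this cleanly, which is why explainability for them remains an open research area.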

The Role of Government and Industry in AI Governance

The rapid advancement of artificial intelligence necessitates a robust and coordinated approach to governance, involving both governments and the private sector. The stakes are high: unfettered AI development could lead to unforeseen consequences, while overly restrictive regulations might stifle innovation. Finding the right balance is crucial for reaping the benefits of AI while mitigating its potential harms.

Governmental Approaches to AI Regulation

Different nations are adopting diverse strategies for AI regulation, reflecting their unique priorities and technological landscapes. The European Union, for example, is pursuing a comprehensive approach with the AI Act, aiming to classify AI systems based on risk levels and impose specific requirements for high-risk applications like healthcare and law enforcement. This contrasts with the more flexible, principles-based approach favored by the United States, which focuses on promoting responsible AI development through guidance and voluntary standards rather than strict, prescriptive laws. China, meanwhile, emphasizes the development of AI for national strategic goals, incorporating AI governance into broader economic and security policies. These differing approaches highlight the complex interplay between national interests, technological capabilities, and ethical considerations in shaping AI policy.

Industry Initiatives for Responsible AI

The tech industry itself is increasingly recognizing the need for self-regulation. Many large companies have established internal AI ethics boards and published responsible AI principles. These initiatives often focus on areas such as fairness, transparency, accountability, and privacy. For instance, Google’s AI Principles emphasize the importance of avoiding bias, ensuring safety and security, and being socially beneficial. These internal guidelines, however, often lack the enforcement mechanisms of government regulations. Industry collaborations, such as the Partnership on AI, also aim to foster best practices and facilitate knowledge sharing among leading AI companies. The effectiveness of these industry initiatives depends on their transparency, commitment, and the willingness of companies to adhere to their own stated principles.

Challenges of International Cooperation in AI Safety Regulation

Achieving global consensus on AI safety regulations presents significant challenges. The diverse regulatory landscapes, differing technological capabilities, and competing national interests create obstacles to harmonizing standards. The lack of a unified international body with the authority to enforce global AI regulations further complicates matters. International cooperation requires a commitment to shared principles and a willingness to compromise on specific regulatory approaches. The potential for regulatory arbitrage – where companies move their AI development to jurisdictions with less stringent regulations – also needs to be addressed to ensure effective global governance. Successful international cooperation in AI safety regulation will likely require a multi-stakeholder approach involving governments, industry, academia, and civil society.

Innovation versus Safety in AI Development

The tension between fostering innovation and ensuring safety in AI development is a central challenge in AI governance. Overly cautious regulations could stifle progress and limit the potential benefits of AI. Conversely, a lack of regulation could lead to the deployment of unsafe or harmful AI systems. Finding the optimal balance requires a nuanced approach that promotes responsible innovation while mitigating risks. This may involve prioritizing the development and deployment of AI systems in areas with clear societal benefits, while carefully managing the risks associated with more powerful and potentially less predictable AI technologies. A key consideration is the need for iterative regulation, allowing for adjustments as AI technology evolves and our understanding of its potential impacts improves. This adaptive approach would allow for continuous refinement of regulatory frameworks to strike the right balance between encouraging innovation and mitigating safety risks.

The Future of AI and Human Interaction

The rapid advancement of AI, particularly large language models, presents a future brimming with both immense opportunities and significant challenges for humanity. How humans interact with technology, the nature of work, and even the very fabric of our economies are poised for radical transformation. Understanding these potential shifts is crucial for navigating this new era responsibly.

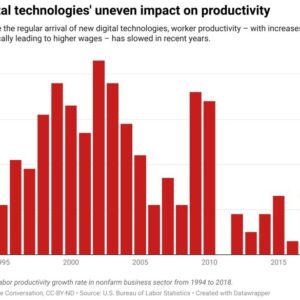

The potential impact of advanced AI on employment and the economy is a complex and multifaceted issue. Some sectors will undoubtedly experience significant job displacement as AI-powered automation takes over routine tasks. Manufacturing, transportation, and customer service are prime examples where this is already happening. However, it’s crucial to acknowledge the potential for AI to create new jobs as well. The development, maintenance, and oversight of AI systems will necessitate a new workforce skilled in data science, AI ethics, and related fields. The overall economic impact will depend on how effectively societies adapt to this shift, investing in retraining programs and fostering innovation in areas where human ingenuity and creativity remain irreplaceable.

AI’s Impact on Employment and the Economy

The automation potential of AI is undeniably transformative. Consider the self-driving truck industry: the widespread adoption of autonomous vehicles could displace millions of truck drivers globally. Conversely, the development and deployment of these systems require engineers, software developers, and technicians. The net effect on employment is still debated, but proactive measures are essential to mitigate potential job losses through reskilling initiatives and investment in new industries. For example, governments could incentivize the growth of sectors that complement AI, such as personalized education and creative industries, where human skills remain central. This requires a strategic approach to education and workforce development, focusing on adaptability and lifelong learning.

Transformations in Human-Computer Interaction

As AI systems become more sophisticated, the nature of human-computer interaction will evolve dramatically. We are moving beyond simple command-line interfaces and graphical user interfaces towards more intuitive and natural interactions. Imagine interacting with AI through natural language, voice commands, and even brain-computer interfaces. These advancements could make technology more accessible to a wider population, but also raise concerns about privacy, security, and the potential for manipulation. The development of AI systems that understand and respond to human emotions and nuances will be key to building trust and ensuring a positive user experience. This necessitates the creation of AI systems that are not only intelligent but also empathetic and ethically sound.

Ensuring Equitable Access to AI

The benefits of AI technology should not be confined to a privileged few. Ensuring equitable access and distribution requires addressing issues of digital literacy, infrastructure, and economic disparity. Many parts of the world lack the necessary infrastructure (reliable internet access, computing power) to fully participate in the AI revolution. Bridging this digital divide is essential for promoting inclusivity and preventing the exacerbation of existing inequalities. Furthermore, educational programs focused on AI literacy are crucial to empower individuals and communities to understand and utilize AI tools effectively. Without such efforts, the benefits of AI will be unevenly distributed, potentially widening the gap between the technologically advanced and the technologically disadvantaged.

Long-Term Coexistence of Humans and Advanced AI

The long-term coexistence of humans and highly advanced AI is a topic of ongoing debate and speculation. One potential scenario is a collaborative partnership, where AI augments human capabilities, enhancing productivity and problem-solving across various domains. This could lead to a future where humans focus on creative and strategic tasks, while AI handles routine and repetitive work. Another scenario, however, involves a more competitive relationship, where AI systems surpass human intelligence and potentially challenge human control. This raises significant ethical and societal challenges, necessitating careful consideration of AI safety and alignment. The development of robust ethical guidelines and regulatory frameworks is paramount to ensure that AI remains a tool for human betterment and does not pose an existential threat. This necessitates a proactive and global approach to AI governance, fostering collaboration between researchers, policymakers, and the public.

Summary

Geoffrey Hinton’s warnings serve as a stark reminder: the future of AI isn’t predetermined. While the potential benefits are immense, the risks are equally significant. Navigating this complex landscape requires a collaborative effort, demanding responsible development, robust regulation, and a constant critical evaluation of the technology’s impact on society. Ignoring the potential downsides isn’t an option; the future of humanity might depend on our ability to address them proactively.