The rise of hyperrealistic deepfakes is blurring the line between reality and fabrication. Imagine a CEO’s voice used to authorize a fraudulent transaction, or a fabricated video destroying a company’s reputation. This isn’t science fiction; it’s a growing threat to businesses, their leaders, and the trust they depend on. This article examines the interplay between AI technology, corporate vulnerabilities, and the role academics play in addressing the problem.

From the technical intricacies of deepfake creation to the legal and ethical minefields they create, we’ll unpack the challenges and potential solutions. We’ll examine how companies are fortifying their defenses, the strategies executives are employing to protect themselves, and the critical research being conducted in academic labs. Ultimately, this journey will illuminate the collaborative efforts needed to mitigate the risks and harness the potential of this powerful, yet potentially dangerous, technology.

AI Deepfake Technology

The world of artificial intelligence is rapidly evolving, and one of its most fascinating and potentially disruptive creations is the deepfake. These hyperrealistic manipulated videos and audio recordings, once a niche technological marvel, are becoming increasingly sophisticated and accessible, raising significant ethical and societal concerns. Understanding the current state and future trends of deepfake technology is crucial for navigating its complex implications.

Deepfake technology has evolved remarkably quickly. Early attempts, relying on simpler algorithms, produced noticeably artificial results. Advances in machine learning, particularly deep learning techniques such as generative adversarial networks (GANs), have since dramatically improved the realism and subtlety of deepfakes. Key milestones include more robust GAN architectures capable of handling high-resolution video and audio, along with better modeling of facial expressions and lip synchronization. The availability of deepfake creation tools, both as open-source software and on commercial platforms, has further accelerated the technology’s spread.

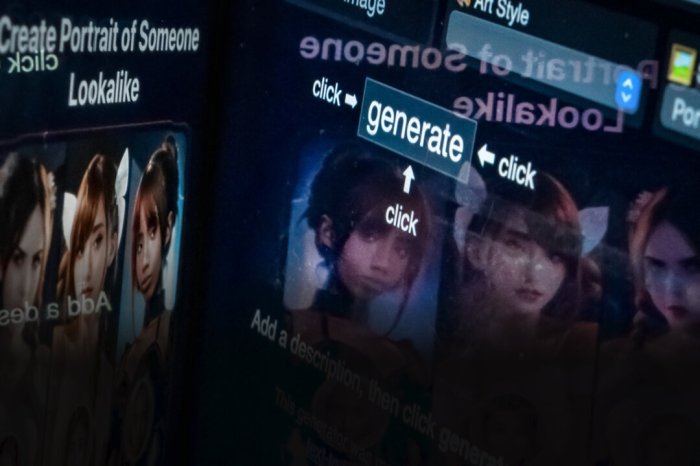

Deepfake Creation Methods

Several methods exist for creating deepfakes, each with a different level of complexity and realism. Early methods often involved simple face-swapping, overlaying one person’s face onto another’s body in a video. More sophisticated approaches use deep learning models trained on massive datasets of images and videos. These models learn the nuances of facial features, expressions, and movements, which lets them produce highly convincing deepfakes. The methods keep advancing, with newer techniques targeting tighter audio and video synchronization and more complex conditions such as changes in lighting and viewpoint. The difference between a simple face swap and a deepfake created with advanced GANs is akin to the difference between a crude charcoal sketch and a photorealistic painting.
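For readers who want the idea in symbols, the adversarial training behind GAN-based methods is commonly summarized by the minimax objective from the original GAN formulation. The notation below does not come from this article and is offered only as a sketch: $G$ is the generator that produces fake samples from random noise $z$, $D$ is the discriminator that outputs the probability that its input is real, and $p_{\text{data}}$ and $p_z$ are the distributions of real media and noise. Practical deepfake tools use many variants of this objective.

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator is rewarded for telling real from generated samples, and the generator is rewarded for fooling it, which is why realism tends to improve as training proceeds.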

Ethical Implications of Deepfake Technology

The rapid advancement of deepfake technology presents a plethora of ethical challenges. The potential for misuse is immense, ranging from the creation of non-consensual pornography to the spread of misinformation and political manipulation. Deepfakes can be used to damage reputations, incite violence, and erode trust in institutions. The difficulty in distinguishing real from fake content poses a significant threat to the integrity of information and the social fabric. Moreover, the ease of creating deepfakes raises concerns about accountability and the potential for malicious actors to operate with impunity. The lack of clear legal frameworks and robust detection mechanisms further exacerbates these concerns.

Hypothetical Corporate Misuse of Deepfakes

Imagine a scenario where a competitor uses deepfake technology to create a video of a company CEO admitting to corporate wrongdoing or revealing sensitive financial information. This fabricated video, shared widely online, could severely damage the company’s reputation, leading to a significant drop in stock prices, loss of investor confidence, and potential legal repercussions. The authenticity of the deepfake could be incredibly difficult to disprove, even with forensic analysis, causing widespread confusion and uncertainty. Such a scenario highlights the urgent need for robust deepfake detection technologies and proactive measures to mitigate the risks associated with this powerful technology.

Deepfakes and Corporate Executives

The rise of sophisticated AI deepfake technology presents a significant and growing threat to corporate executives. These hyperrealistic fabricated videos and audio recordings can be weaponized for malicious purposes, leading to severe financial losses, reputational damage, and even legal repercussions. Understanding the vulnerabilities and implementing robust mitigation strategies is crucial for modern businesses.

Deepfake attacks against corporate executives exploit several key vulnerabilities. Executives occupy positions of authority and trust, which makes them prime targets for impersonation. Their public profiles, including interviews, earnings calls, and conference talks available online, give deepfake creators ample material from which to model their mannerisms, speech patterns, and facial expressions. The more footage and audio of a person is public, the easier it is to produce a convincing fake of them.

Examples of Malicious Deepfake Use Against Executives

Deepfakes can be used to carry out a range of malicious activities targeting corporate executives. For example, a deepfake video of a CEO appearing to authorize a large wire transfer could fool a finance department into sending money to a fraudster. Similarly, a deepfake audio recording of an executive making inflammatory or damaging statements could hurt a company’s reputation and stock price. A deepfake could also be used to persuade a supplier to release sensitive data. Another example is a deepfake that shows an executive engaging in unethical or illegal activities, which could trigger investigations and reputational harm. In each case the consequences can include significant financial losses and legal battles.

Proactive Measures to Detect and Prevent Deepfake Attacks

Companies can take several proactive steps to mitigate the risk of deepfakes targeting their executives, and a multi-layered approach is key. First, rigorous employee training should cover deepfake awareness and identification. Employees should learn to spot warning signs in video and audio, such as unnatural blinking, subtle mismatches in lighting or background, and poor lip synchronization. Second, companies should invest in deepfake detection technologies and update them regularly to keep up with evolving techniques. Third, strong authentication beyond simple passwords, such as multi-factor authentication (MFA) and biometric verification, is crucial. Finally, clear internal protocols for verifying sensitive requests, such as large financial transactions or releases of sensitive data, can help prevent fraud.
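The last point, an internal protocol for verifying sensitive requests, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a recommended policy: the thresholds, the `TransferRequest` fields, and the `missing_checks` helper are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field

# Hypothetical policy values; real thresholds would come from a company's own risk policy.
CALLBACK_THRESHOLD = 10_000        # at or above this, require an out-of-band callback
DUAL_APPROVAL_THRESHOLD = 50_000   # at or above this, also require two independent approvers


@dataclass
class TransferRequest:
    requester: str                   # who the request claims to come from
    amount: float
    channel: str                     # e.g. "video_call"; kept for audit, never used to relax checks
    callback_verified: bool = False  # confirmed via a number from the internal directory
    approvers: set = field(default_factory=set)


def missing_checks(request: TransferRequest) -> list:
    """Return the checks still outstanding; an empty list means the request may proceed."""
    missing = []
    if request.amount >= CALLBACK_THRESHOLD and not request.callback_verified:
        missing.append("out-of-band callback")
    # The requester never counts as their own approver.
    independent = request.approvers - {request.requester}
    if request.amount >= DUAL_APPROVAL_THRESHOLD and len(independent) < 2:
        missing.append("two independent approvers")
    return missing


request = TransferRequest(requester="ceo", amount=250_000, channel="video_call")
print(missing_checks(request))
```

The design choice worth noting is that the decision never depends on how convincing the video or voice seemed. A request that looks and sounds exactly like the CEO still waits for the callback and the second approver.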

Comparison of Deepfake Detection Technologies

| Technology | Effectiveness | Strengths | Weaknesses |
|---|---|---|---|
| Video and Audio Analysis Software | Moderate to High (depending on sophistication) | Can detect inconsistencies in facial expressions, lip synchronization, and audio artifacts. | Can be bypassed by highly sophisticated deepfakes; requires regular updates. |
| Blockchain-based Authentication | High | Provides a tamper-evident record that a video or audio file is unchanged since it was registered. | Implementation can be complex and expensive; only covers media that was registered at the source. |
| AI-powered Deepfake Detection | High (continuously improving) | Can identify subtle anomalies undetectable by human eyes. | Requires significant computing power and ongoing model training. |
| Multi-Factor Authentication (MFA) | High (when combined with other methods) | Adds an extra layer of security beyond passwords by verifying the requester rather than the media. | Can be bypassed if other security measures are weak. |
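Ratings such as "Moderate" or "High" are easier to compare when they are backed by measurements on a labeled test set. The sketch below is an illustration rather than a benchmark: the `detection_metrics` function and the sample labels are made up for this example. It computes three common figures for a binary detector, where `True` means "fake".

```python
def detection_metrics(labels, predictions):
    """Compute basic metrics for a binary deepfake detector.

    labels and predictions are equal-length sequences of booleans; True means "fake".
    """
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y and p)          # fakes correctly flagged
    fp = sum(1 for y, p in pairs if not y and p)      # real media wrongly flagged
    fn = sum(1 for y, p in pairs if y and not p)      # fakes that slipped through
    tn = sum(1 for y, p in pairs if not y and not p)  # real media correctly passed
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }


# Made-up example: 3 fake clips and 5 real clips.
labels = [True, True, True, False, False, False, False, False]
predictions = [True, True, False, True, False, False, False, False]
print(detection_metrics(labels, predictions))
```

Recall shows how many fakes get caught, while the false positive rate shows how often genuine footage would be wrongly flagged. For executive communications both matter, since a detector that flags real messages too often will simply be ignored.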

Academics’ Role in Deepfake Research and Development

AI deepfakes are a serious concern for companies, executives, and academics alike, and they demand proactive measures. Understanding the evolving threat landscape is crucial, and Anne Neuberger’s cybersecurity Q&A offers a valuable perspective on it. That knowledge helps companies, executives, and academics navigate the challenges this technology poses.

The rise of deepfake technology has spurred a parallel surge in academic research dedicated to understanding, detecting, and mitigating its potential harms. Universities and research labs worldwide are contributing significantly to the development of countermeasures, pushing the boundaries of computer vision, machine learning, and digital forensics. Their work is crucial in shaping the future of this rapidly evolving field, informing policy, and providing tools to combat the spread of misinformation.

Academic researchers are at the forefront of developing sophisticated deepfake detection and mitigation techniques. Their contributions span various areas, including the creation of robust detection algorithms, the development of new data augmentation strategies for training more resilient models, and the exploration of novel methods for identifying subtle artifacts indicative of deepfake manipulation. This research isn’t just theoretical; it’s actively shaping the tools and techniques used by tech companies and law enforcement to combat deepfakes.

Prominent Academic Institutions Involved in Deepfake Research

Many leading universities and research institutions are actively involved in deepfake research. The collaborative nature of this field means that breakthroughs often stem from international partnerships and the sharing of datasets and methodologies. A strong focus exists on developing techniques that can accurately identify manipulated media, even in the face of increasingly sophisticated deepfake creation methods. The race is on to stay ahead of the curve.

Challenges Faced by Academics in Deepfake Research

Academic deepfake research faces significant ethical and technical limitations. Ethically, researchers grapple with the responsible use of deepfake technology itself. Creating high-quality deepfakes for research purposes requires careful consideration of potential misuse and the need for robust safeguards to prevent malicious actors from accessing or exploiting these techniques. Technically, the sheer scale of data required to train effective detection models, coupled with the ever-evolving nature of deepfake creation methods, presents a constant challenge. The “arms race” between deepfake creators and detectors demands continuous innovation and adaptation. Access to large, high-quality datasets of both real and manipulated media is often limited due to privacy concerns and the difficulty of acquiring authentic deepfakes for analysis.

Approaches to Addressing the Deepfake Problem

Different research groups are employing diverse approaches to tackle the deepfake problem. Some focus on developing advanced detection algorithms based on subtle inconsistencies in facial expressions, lighting, or video artifacts. Others concentrate on improving the robustness of existing detection methods by incorporating more sophisticated machine learning techniques and exploring the use of multimodal data (combining visual and audio analysis). Still others are investigating the development of new forensic techniques to identify the source of deepfake videos or the tools used to create them. The diversity of approaches highlights the complexity of the problem and the need for a multi-faceted solution. For instance, some researchers are exploring blockchain technology to create tamper-proof records of media authenticity, while others are working on developing digital watermarks that can be embedded in videos to indicate manipulation.
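The idea of a tamper-proof record of media authenticity can be illustrated with a hash chain, the basic data structure that blockchains build on. The Python sketch below is a toy stand-in, not a real blockchain or any specific research system: the `MediaLedger` class and its method names are hypothetical, and it assumes the original media is registered at publication time.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class MediaLedger:
    """Append-only, hash-chained list of media fingerprints (toy example)."""

    def __init__(self):
        self.entries = []

    def register(self, media_bytes: bytes, publisher: str) -> dict:
        # Each entry commits to the previous one, so editing history breaks the chain.
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else "0" * 64
        record = {
            "media_hash": sha256_hex(media_bytes),
            "publisher": publisher,
            "prev_hash": prev_hash,
        }
        record["entry_hash"] = sha256_hex(json.dumps(record, sort_keys=True).encode())
        self.entries.append(record)
        return record

    def is_registered(self, media_bytes: bytes) -> bool:
        # Any change to the file, even one byte, produces a different hash.
        digest = sha256_hex(media_bytes)
        return any(e["media_hash"] == digest for e in self.entries)

    def chain_is_intact(self) -> bool:
        prev_hash = "0" * 64
        for e in self.entries:
            body = {k: e[k] for k in ("media_hash", "publisher", "prev_hash")}
            expected = sha256_hex(json.dumps(body, sort_keys=True).encode())
            if e["prev_hash"] != prev_hash or e["entry_hash"] != expected:
                return False
            prev_hash = e["entry_hash"]
        return True


ledger = MediaLedger()
ledger.register(b"original video bytes", "press-office")
print(ledger.is_registered(b"original video bytes"))
print(ledger.is_registered(b"original video bytes, altered"))
```

A record like this can show that a file matches what was registered, but it cannot show that the registered original was genuine, and re-encoding a video changes its hash. That is one reason researchers also pursue watermarking and forensic analysis.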

The Interplay Between Companies, Executives, and Academics

The fight against deepfakes requires a united front, a powerful synergy between the innovative minds of academia and the resources and reach of industry. This isn’t just about detecting fake videos; it’s about building a robust ecosystem of defense against a rapidly evolving threat to societal trust and security. The collaborative efforts between companies, their executives, and academic researchers are crucial in developing and deploying effective countermeasures.

The effectiveness of combating deepfakes hinges on the seamless exchange of knowledge and technology between the corporate and academic worlds. Companies possess vast datasets, computing power, and real-world deployment experience, while universities provide cutting-edge research, theoretical frameworks, and a pipeline of talented individuals. Bridging this gap is not merely beneficial; it’s essential for staying ahead of the curve in this technological arms race.

Successful Partnerships in Deepfake Countermeasures

Several successful collaborations exemplify the power of industry-academia partnerships in the deepfake domain. For instance, partnerships between tech giants like Microsoft and leading universities specializing in computer vision and AI have yielded significant advancements in deepfake detection algorithms. These collaborations often involve joint research projects, shared data sets, and the development of open-source tools and resources. Another example could involve a cybersecurity firm collaborating with a university’s media forensics lab to develop a robust deepfake detection system for social media platforms. These partnerships leverage the strengths of both parties: the company provides the practical application context and resources, while the university contributes theoretical breakthroughs and specialized expertise.

Knowledge Transfer and Technology Development

The potential for knowledge transfer and technology development through these collaborations is immense. Academic research can inform the development of more effective deepfake detection tools used by companies, while real-world data and deployment challenges faced by companies can help refine and focus academic research. This reciprocal exchange leads to faster innovation and more robust solutions. For example, a university’s research on subtle visual cues indicative of deepfakes could directly improve the accuracy of a company’s deepfake detection software. Conversely, the company’s experience in deploying such software at scale can highlight areas where academic research needs to be improved or adapted.

A Framework for Effective Communication and Knowledge Sharing

Establishing a robust framework for communication and knowledge sharing is paramount. This framework should involve: regular workshops and conferences to facilitate the exchange of information; the creation of shared databases and repositories of deepfake data for research and development; joint research projects with clearly defined goals, deliverables, and intellectual property rights; and open-source initiatives to promote wider adoption and collaborative improvement of deepfake detection technologies. Moreover, funding mechanisms that encourage and support these collaborations are crucial for fostering a sustainable and productive partnership between industry and academia. This could include government grants specifically aimed at deepfake countermeasures research, as well as corporate sponsorship of university research projects.

Legal and Regulatory Frameworks for AI Deepfakes

The rise of AI deepfakes presents a novel challenge to existing legal and regulatory frameworks. While laws concerning defamation, fraud, and privacy exist, their applicability to deepfakes is often unclear and inconsistently enforced, creating a legal grey area ripe for exploitation. The rapid advancement of deepfake technology further exacerbates this issue, demanding a proactive and adaptable response from lawmakers worldwide.

The current legal landscape surrounding deepfakes is a patchwork of existing laws applied in novel ways. Laws against defamation and libel are frequently invoked when deepfakes are used to damage someone’s reputation. Similarly, laws protecting intellectual property rights may be applicable if deepfakes infringe on copyright or trademark. However, proving the intent to deceive or the actual harm caused can be challenging, particularly with sophisticated deepfakes that are difficult to detect. Furthermore, jurisdiction becomes complicated when deepfakes are created and distributed across international borders.

Current Legal Gaps and Limitations

Existing laws often struggle to keep pace with the rapidly evolving capabilities of deepfake technology. One major limitation is the difficulty in proving the origin and intent behind a deepfake. Current laws often require demonstrating malicious intent, which can be difficult to establish when the deepfake is cleverly disguised or its creator remains anonymous. Another limitation lies in the enforcement of existing laws across international borders. Deepfakes can be easily disseminated online, making it challenging to identify and hold accountable those responsible for their creation and distribution. The lack of clear legal definitions of what constitutes a “deepfake” further complicates matters, hindering effective legal action.

Examples of Proposed Legislation and Regulatory Initiatives

Several countries and regions are adopting or exploring legislative and regulatory measures to address the challenges posed by deepfakes. The European Union’s AI Act, adopted in 2024, regulates high-risk AI systems and adds transparency obligations under which AI-generated or manipulated content such as deepfakes must be disclosed as such. In the United States, various bills have been introduced at both the state and federal levels, focusing on issues such as disclosure requirements for deepfakes used in political advertising or the development of technological solutions to detect and mitigate the spread of deepfakes. For example, California’s AB 730, enacted in 2019, restricts the distribution of materially deceptive audio or video of a political candidate in the run-up to an election unless it carries a disclosure that it has been manipulated. These initiatives highlight a growing global recognition of the need for proactive legal frameworks to manage the risks associated with deepfake technology.

Recommendations for Improving Legal and Regulatory Frameworks

To effectively manage the risks associated with deepfakes, a multi-pronged approach is necessary. This includes:

- Clearer Legal Definitions: Establishing clear legal definitions of “deepfake” and related terms is crucial to provide a common understanding and facilitate consistent enforcement of laws.

- Enhanced Detection and Reporting Mechanisms: Investing in research and development of robust deepfake detection technologies and establishing efficient reporting mechanisms to quickly identify and address harmful deepfakes are essential.

- International Cooperation: International collaboration is crucial to address the transnational nature of deepfake distribution and ensure consistent legal frameworks across jurisdictions.

- Focus on Accountability: Legal frameworks should focus on holding creators and distributors of malicious deepfakes accountable for their actions, regardless of their location or anonymity.

- Promoting Media Literacy: Public education campaigns are needed to increase awareness about deepfakes and enhance critical thinking skills among the public, enabling them to better discern authentic content from manipulated material.

Public Awareness and Education Regarding AI Deepfakes

The proliferation of AI deepfake technology presents a significant threat to individuals, organizations, and society as a whole. Misinformation spread through realistic but fabricated videos and audio can erode trust, damage reputations, and even incite violence. Therefore, a robust public awareness campaign is crucial to equip individuals with the tools to identify and critically assess the authenticity of digital media. Educating the public isn’t just about spotting fake videos; it’s about fostering media literacy and critical thinking skills in the digital age.

The potential for harm from deepfakes is immense. Consider the impact of a deepfake video falsely depicting a political candidate admitting to a crime or a celebrity endorsing a harmful product. The consequences could range from electoral manipulation to financial losses and reputational damage. Understanding the technology’s capabilities and limitations is the first step in mitigating these risks.

Examples of Effective Public Awareness Campaigns

Several organizations have begun initiatives to raise public awareness about deepfakes. For example, some educational institutions have incorporated deepfake detection into their media literacy curriculum. These programs often involve interactive workshops where students are exposed to various examples of deepfakes and learn techniques to identify them. Furthermore, some media outlets have produced documentaries and news segments that educate viewers about the technology and its potential consequences. These campaigns have largely focused on highlighting the technical aspects of deepfakes, demonstrating how they are created and identifying tell-tale signs of manipulation, such as unnatural blinking or inconsistent lighting. The effectiveness of these campaigns is still being evaluated, but they represent a crucial first step in a larger effort.

A Public Education Program for Deepfake Detection

A comprehensive public education program should incorporate several key elements. Firstly, it should provide a basic understanding of AI deepfake technology, explaining how it works and its potential applications, both benign and malicious. Secondly, it should teach individuals how to identify common indicators of deepfakes, such as inconsistencies in facial expressions, unnatural movements, and audio-visual discrepancies. This could involve interactive online modules, short videos, and downloadable guides with practical examples. Thirdly, the program should emphasize the importance of source verification and critical thinking. People should be encouraged to question the authenticity of online content and to cross-reference information from multiple reliable sources. Finally, the program should promote responsible online behavior, encouraging users to report suspected deepfakes and to avoid sharing unverified content.

Impact of Increased Public Awareness

Increased public awareness of AI deepfakes has the potential to significantly mitigate their harmful effects. By equipping individuals with the skills to identify and critically evaluate deepfakes, we can reduce the spread of misinformation and protect against the manipulation and exploitation that deepfakes enable. A more informed public is less likely to be swayed by deepfake propaganda, and this increased skepticism can serve as a powerful deterrent against malicious use of the technology. Furthermore, widespread awareness can put pressure on social media platforms and other online services to implement more effective detection and removal mechanisms for deepfakes. The collective action of an informed citizenry is crucial in shaping a digital landscape where deepfakes pose less of a threat.

Final Summary

The threat of AI deepfakes is real, and it’s evolving faster than many realize. The future of trust in the digital age hinges on a multi-pronged approach: robust technological defenses, proactive corporate strategies, insightful academic research, and a well-informed public. Collaboration between companies, executives, and academics is essential to navigating this complex landscape and securing a future where truth prevails over deception. The stakes are high, but with concerted effort, we can build a more resilient and trustworthy digital world.